From Automation to Control: Architecting the Software-Defined Grid

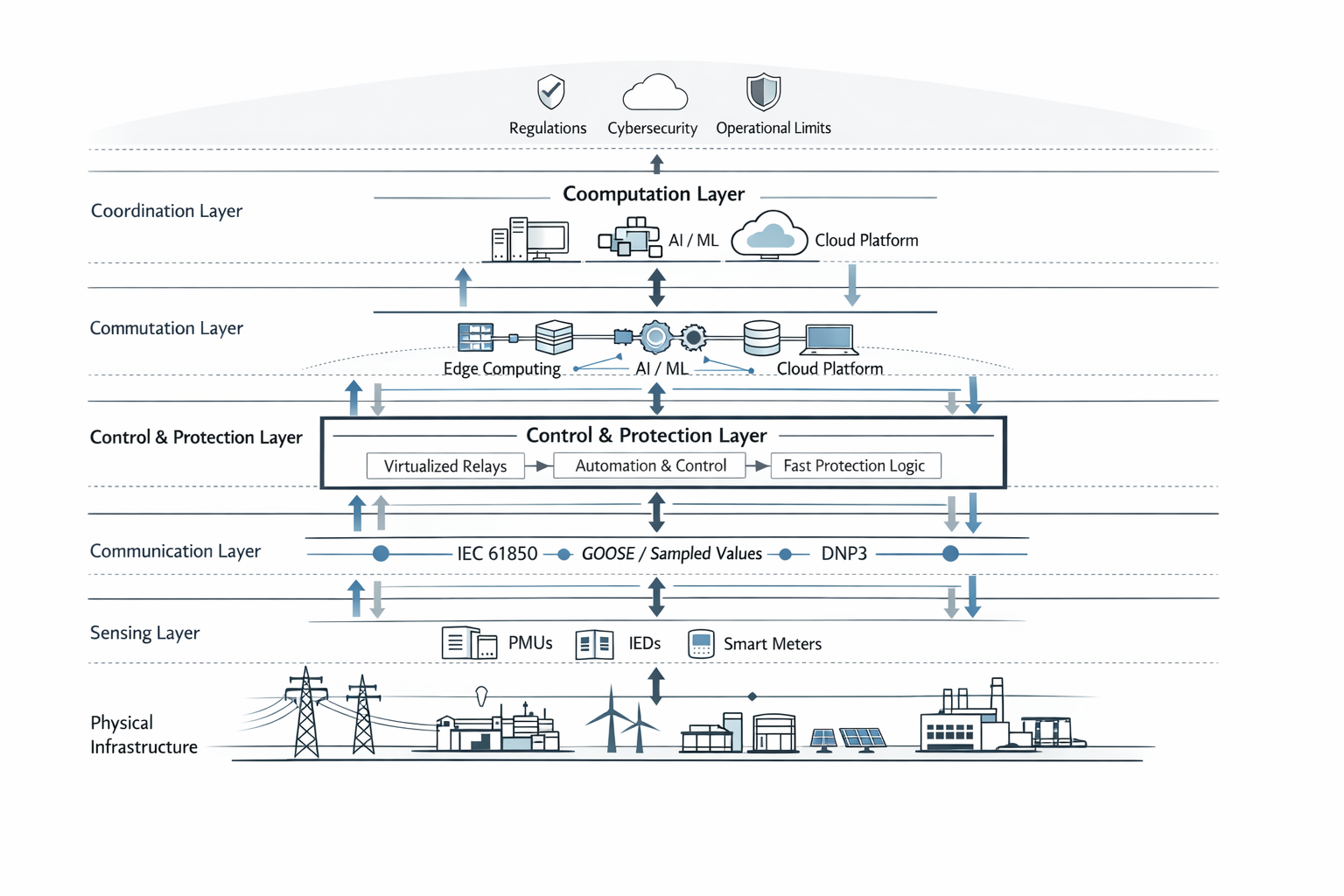

The grid is shifting from hardware-based control to software-defined systems. AI, electrification, and distributed energy are driving this change, introducing new risks and requiring integrated, adaptive control across sensing, communication, and computation layers.

For most of its history, the electric grid operated as a physically constrained system in which control was embedded directly in equipment design. System behavior was determined by network topology, electromechanical dynamics, and protection schemes implemented through dedicated hardware. Stability was achieved through deterministic responses defined by fixed settings and well-understood operating limits.

This foundation is now changing. Control functions are progressively separating from physical devices and being implemented in software-based systems. Protection logic is increasingly configurable. Substations are evolving into networked environments. Communication systems have become integral to protection and control performance rather than auxiliary support layers. These developments indicate a structural transition in how the grid is designed and operated.

This article characterizes the transition as a shift to programmable control architectures, in which grid behavior is determined not only by physical infrastructure but by the design of control across sensing, communication, computation, and actuation layers. This framework provides a technical lens for analyzing how software-defined automation alters system performance, risk, and operational constraints.

Figure 1. Layered control architecture of a software-defined electric grid. Control is distributed across sensing, communication, and computation layers, with bidirectional feedback between physical infrastructure and software-defined decision systems.

Three concurrent developments are driving the shift to this framework. Distributed energy resources are increasing system decentralization and bidirectional power flows. Electrification is introducing new load profiles with different temporal and spatial characteristics. Artificial intelligence is creating large-scale electricity demand from computation that does not track traditional consumption patterns. These factors are reducing the effectiveness of legacy planning assumptions and increasing the need for adaptive control strategies.

Current industry responses have focused on digitization, including deployment of sensors, advanced communications, and data analytics. However, digitization alone does not address the underlying architectural changes. The critical issue is how control is structured across increasingly interconnected and software-dependent systems.

This article examines the implications of this framework for grid architecture, operational risk, and system design. It focuses on how software-defined control modifies system behavior, introduces new failure modes, and requires integrated approaches to sensing, communication, and control. The objective is to provide a technical foundation for understanding the transition from hardware-defined to software-defined grid operations.

The Rise of Software-Defined Automation

In the traditional substation, function followed form. Protection relays were discrete devices, each responsible for a specific zone of the system. Control logic was embedded in hardware. Communication occurred through hardwired connections, designed for reliability rather than flexibility. These systems were robust, but they were also rigid. Changing a protection scheme often meant rewiring panels or replacing equipment.

The emergence of digital substations began to loosen these constraints. With the adoption of IEC 61850, the industry gained a common language for describing and exchanging information. Logical nodes replaced fixed wiring. Protection schemes could be expressed in software, and devices from different manufacturers could communicate over standardized networks.¹ The substation started to look less like a collection of devices and more like an information system.

This shift did not happen all at once. It unfolded gradually, as utilities experimented with new configurations and vendors developed interoperable products. Yet the direction was clear. Functions that had once been inseparable from hardware were becoming abstract, portable, and reconfigurable.

The same transformation had already occurred in other sectors. In telecommunications, the separation of control from physical infrastructure enabled rapid innovation and scalability.² In computing, virtualization allowed multiple applications to run on shared hardware, dramatically increasing efficiency. The grid is now following a similar path, though with far higher stakes.

Virtualization represents the next step in this evolution. Protection, control, and monitoring functions can now be deployed as software applications running on general-purpose computing platforms.³ Instead of a one-to-one mapping between device and function, a single platform can host multiple services, each interacting through defined interfaces.

This creates new possibilities. Utilities can deploy updates without replacing hardware. New functionalities can be introduced more quickly. Systems can be scaled and reconfigured in response to changing conditions. At the same time, these advantages come with new dependencies. The performance of protection schemes now depends not only on electrical conditions, but on computing resources, network latency, and software reliability.
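The decoupling of function from device can be illustrated with a minimal sketch. It assumes a hypothetical `Platform` class that hosts named protection functions and a simplified overcurrent check; the names, pickup values, and interface are illustrative only and are not drawn from IEC 61850 or any vendor product.

```python
from typing import Callable, Dict

# A hosted function maps a measurement (here, current in amperes) to a trip decision.
ProtectionFunction = Callable[[float], bool]


class Platform:
    """Hypothetical general-purpose host for several software-defined functions."""

    def __init__(self) -> None:
        self._services: Dict[str, ProtectionFunction] = {}

    def deploy(self, name: str, fn: ProtectionFunction) -> None:
        # Deploying under an existing name replaces the logic; no hardware changes.
        self._services[name] = fn

    def evaluate(self, name: str, measurement: float) -> bool:
        return self._services[name](measurement)


def overcurrent(pickup_amps: float) -> ProtectionFunction:
    """Simplified instantaneous overcurrent element (illustrative, no time delay)."""
    return lambda amps: amps > pickup_amps


platform = Platform()
platform.deploy("feeder_1_overcurrent", overcurrent(600.0))
trip_before = platform.evaluate("feeder_1_overcurrent", 550.0)  # False: below 600 A pickup

# A settings change is a software update rather than a panel rewiring.
platform.deploy("feeder_1_overcurrent", overcurrent(500.0))
trip_after = platform.evaluate("feeder_1_overcurrent", 550.0)  # True: above 500 A pickup
```

The same property that makes the update easy also makes the function dependent on the health of the host platform, which is the new dependency described above.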

The grid begins to resemble a distributed computing system. Data flows from sensors to processors, from edge devices to centralized platforms, and back again as control signals. Cloud and edge computing architectures extend this capability, allowing analysis and coordination across wide geographic areas.⁴ What was once a localized system of control is becoming a layered architecture of decision-making.

Communication as Infrastructure

With the adoption of IEC 61850, communication within the substation is no longer an afterthought. It is the backbone of operation. Protocols such as GOOSE and Sampled Values enable high-speed exchange of information, allowing protection and control actions to occur with precision and coordination.⁵

The implications are profound. Interoperability becomes achievable, as devices from different vendors can operate within the same framework. Physical complexity is reduced, as wiring gives way to networked communication. Yet this shift also introduces a new reality: the communication network itself becomes part of the protection system.

Latency, packet loss, and synchronization are no longer secondary concerns. They directly affect system performance. A delayed message can be the difference between isolating a fault and allowing it to propagate. The reliability of the grid becomes intertwined with the reliability of its communication infrastructure.
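One way to reason about this dependency is an end-to-end latency budget. The sketch below is a simplified illustration: the stage names, the delay figures, and the 50 ms limit are all assumptions chosen for the example, not values taken from IEC 61850 or from any utility requirement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBudget:
    """Hypothetical per-stage delays for one protection action, in milliseconds."""

    sensing_ms: float
    network_ms: float
    compute_ms: float
    actuation_ms: float

    def total_ms(self) -> float:
        return self.sensing_ms + self.network_ms + self.compute_ms + self.actuation_ms


def within_budget(budget: LatencyBudget, limit_ms: float) -> bool:
    """True when the summed delays fit inside the assumed clearing-time limit."""
    return budget.total_ms() <= limit_ms


LIMIT_MS = 50.0  # assumed limit, for illustration only

nominal = LatencyBudget(sensing_ms=1.0, network_ms=2.0, compute_ms=1.5, actuation_ms=40.0)
congested = LatencyBudget(sensing_ms=1.0, network_ms=12.0, compute_ms=1.5, actuation_ms=40.0)

print(nominal.total_ms(), within_budget(nominal, LIMIT_MS))      # 44.5 True
print(congested.total_ms(), within_budget(congested, LIMIT_MS))  # 54.5 False
```

In this example the electrical conditions and the breaker are identical in both cases; only the network delay changes, and that alone moves the scheme outside its assumed limit.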

As the grid evolves, the communication layer extends beyond substations into the broader network. Advanced metering infrastructure, distributed energy resources, and grid-edge devices all contribute to a growing web of data and control.⁶ This creates a system with multiple layers of interaction, each operating on different timescales and with different objectives.

Local devices respond to immediate conditions. Substations coordinate regional flows. System operators manage broader stability. Increasingly, these layers are connected through data and software, enabling new forms of optimization but also new pathways for failure.

The grid is no longer a one-way delivery system. It is a network of networks, where information and control move alongside electricity.

Artificial Intelligence and Algorithmic Demand

For decades, electricity demand followed patterns that could be understood through human behavior. Peaks occurred during the day, valleys at night. Seasonal changes reflected heating and cooling needs. Industrial demand tracked economic activity.

Artificial intelligence introduces a different pattern. Data centers supporting AI workloads operate continuously, often at high utilization. Their growth is driven not by population or weather, but by advances in computing and software.⁷ New facilities can appear rapidly, bringing with them large, concentrated loads that strain local infrastructure.

This form of demand does not wait for planning cycles. It moves at the pace of technology development, creating a mismatch between infrastructure deployment and load growth.

The implications for grid operation are significant. Forecasting becomes more uncertain, as traditional models struggle to capture the behavior of algorithmic demand. Infrastructure must be built faster, often under conditions of incomplete information. Load concentration creates localized stress, even as overall system capacity appears sufficient.

Utilities are beginning to encounter these challenges in real time. Large data center projects are forcing reconsideration of interconnection processes, planning assumptions, and cost allocation. The grid is being asked to adapt not just to new technologies, but to a new logic of demand.
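The gap between system-wide sufficiency and localized stress can be shown with simple arithmetic. The following sketch uses invented substation names, ratings, and loads purely for illustration; it is not based on any real system or on data from the cited reports.

```python
from typing import Dict, List, Tuple

# Hypothetical substations: name -> (rating in MW, existing peak load in MW).
SUBSTATIONS: Dict[str, Tuple[float, float]] = {
    "north": (100.0, 60.0),
    "south": (100.0, 55.0),
    "east": (100.0, 70.0),
}


def assess(
    substations: Dict[str, Tuple[float, float]], site: str, new_load_mw: float
) -> Tuple[float, List[str]]:
    """Return system-wide headroom (MW) and the substations pushed over rating."""
    total_rating = sum(rating for rating, _ in substations.values())
    total_load = sum(load for _, load in substations.values()) + new_load_mw
    overloaded = []
    for name, (rating, load) in substations.items():
        local_load = load + (new_load_mw if name == site else 0.0)
        if local_load > rating:
            overloaded.append(name)
    return total_rating - total_load, overloaded


# A single 45 MW data center connects at the "east" substation.
headroom_mw, overloaded = assess(SUBSTATIONS, "east", 45.0)
print(headroom_mw, overloaded)  # 70.0 ['east']
```

The system as a whole retains 70 MW of headroom, yet the host substation is 15 MW over its rating. An aggregate view would report adequacy while the local view reports a violation.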

Changing Failure Modes in a Software-Defined Grid

Protection systems have long been built on deterministic logic. Engineers defined conditions under which specific actions would occur, and systems were tested to ensure predictable responses. This approach worked well in a world where system behavior was relatively stable and well understood.

As the grid becomes more software-defined and incorporates adaptive systems, this certainty begins to erode. Decisions may be influenced by data-driven models, machine learning algorithms, and distributed coordination. Outcomes become less predictable, even as systems become more capable.

New forms of risk emerge in this environment. Automated systems can propagate errors at speeds that exceed human response times. Complex algorithms can make decisions that are difficult to interpret, creating challenges for oversight and accountability. Interconnected systems can amplify local disturbances into broader disruptions.

These risks are not unique to the grid. Similar patterns have been observed in financial systems and cloud infrastructure, where tightly coupled, software-driven processes can lead to rapid and unexpected failures. The grid, however, carries a different level of consequence. Its failures are physical, immediate, and visible.

Toward Mission-Based Grid Architecture

To navigate this transition, a different approach to system design is required. Rather than focusing on individual components, a mission-based framework organizes the grid around operational objectives. These missions define what the system must achieve under specific conditions, whether maintaining stability under high renewable penetration, integrating large-scale computational demand, or ensuring resilience during extreme events.

This perspective shifts the focus from devices to outcomes. It asks not what each component does, but how the system performs as a whole.

In a mission-based architecture, sensing, communication, and control are designed as an integrated system. Sensors provide the data needed to understand conditions. Communication networks ensure that information is delivered reliably and on time. Control systems translate that information into coordinated action.

This integration requires alignment across technologies and organizations. Utilities, vendors, and regulators must work within a shared framework, where interfaces are defined and responsibilities are clear. The result is a system that can adapt to changing conditions while maintaining coherence.
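As a rough illustration of what organizing around missions could look like in practice, the sketch below expresses a mission as a set of system-level requirements and checks observed performance against them. The `Mission` and `ObservedPerformance` types, their fields, and the threshold values are hypothetical and are not taken from the Mission Engineering Guide or any utility standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Mission:
    """Hypothetical mission: outcome-level requirements rather than device specs."""

    name: str
    max_end_to_end_latency_ms: float
    min_sensor_availability: float  # fraction between 0 and 1


@dataclass(frozen=True)
class ObservedPerformance:
    end_to_end_latency_ms: float
    sensor_availability: float


def unmet_requirements(mission: Mission, observed: ObservedPerformance) -> List[str]:
    """List which mission requirements the integrated system currently misses."""
    unmet = []
    if observed.end_to_end_latency_ms > mission.max_end_to_end_latency_ms:
        unmet.append("latency")
    if observed.sensor_availability < mission.min_sensor_availability:
        unmet.append("sensor_availability")
    return unmet


mission = Mission("stability_under_high_renewables", 100.0, 0.99)
observed = ObservedPerformance(end_to_end_latency_ms=80.0, sensor_availability=0.97)
print(unmet_requirements(mission, observed))  # ['sensor_availability']
```

The check is deliberately expressed at the level of the whole chain: a control system that meets its own latency target still fails the mission if the sensing layer feeding it is unavailable too often.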

Innovation Beyond Proof-of-Concept

The industry is currently in a period of experimentation. Proof-of-concept projects test new technologies in controlled environments, demonstrating feasibility and building confidence. These efforts are necessary, but they often remain isolated, failing to translate into widespread deployment.

Moving beyond this phase requires a focus on architecture. Technologies must be integrated into existing systems in a way that is scalable, reliable, and aligned with regulatory requirements. This involves standardization, interoperability, and long-term planning.

Innovation is no longer about individual breakthroughs. It is about how those breakthroughs are combined and operationalized.

Governance as a Design Requirement

As control becomes more distributed and autonomous, governance cannot remain external to the system. It must be embedded within it. This includes defining operating boundaries, ensuring transparency, and providing mechanisms for oversight.

These constraints are not limitations. They are essential for maintaining trust and reliability in a system that is becoming more complex and less predictable.
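A minimal sketch of governance embedded in the control path might look like the following. It assumes a hypothetical `GovernedSetpoint` wrapper that enforces an operating boundary and records every request for later review; the per-unit voltage limits and the source name are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class GovernedSetpoint:
    """Hypothetical setpoint guard: enforces bounds and keeps an audit trail."""

    low: float
    high: float
    audit_log: List[Tuple[str, float, bool]] = field(default_factory=list)

    def request(self, source: str, value: float) -> bool:
        # Operating boundary: accept only values inside the configured range.
        accepted = self.low <= value <= self.high
        # Transparency and oversight: every request is recorded, accepted or not.
        self.audit_log.append((source, value, accepted))
        return accepted


voltage_pu = GovernedSetpoint(low=0.95, high=1.05)  # assumed per-unit voltage band
ok = voltage_pu.request("volt_var_optimizer", 1.02)        # True: inside the band
rejected = voltage_pu.request("volt_var_optimizer", 1.10)  # False: outside the band
print(voltage_pu.audit_log)
```

The point of the design is that the boundary and the record live in the same code path as the control action, so an autonomous function cannot act without also being bounded and observable.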

Regulatory and institutional frameworks must evolve alongside technological change. Standards must be updated, planning processes revised, and accountability clarified. This is not a simple task, as it requires coordination across multiple stakeholders with differing priorities.

Yet without this alignment, technological progress will outpace the structures designed to manage it.

Designing Control in an Intelligent Infrastructure

The electric grid is entering a phase that is both promising and uncertain. Advances in technology offer new capabilities, from improved efficiency to enhanced resilience. At the same time, they introduce new complexities and risks that cannot be addressed through incremental change.

The central challenge is clear. It is not enough to deploy new technologies. The architecture of control must be defined, implemented, and governed.

Those who lead this effort will shape the future of the grid. They will determine how it operates, how it responds to change, and how it evolves in the face of forces that were not part of its original design.

The question is no longer whether the grid will become software-defined. That transition is already underway. The question is who will define how it is controlled.

Notes

1. IEC 61850 Standard, International Electrotechnical Commission.

2. N. McKeown et al., “OpenFlow: Enabling Innovation in Campus Networks,” ACM SIGCOMM, 2008.

3. EPRI, “Virtualization in Power Systems,” 2023.

4. OECD, “Data and the Future of Energy Systems,” 2022.

5. IEC 61850 GOOSE Messaging Specifications.

6. NERC, “State of Reliability,” 2024.

7. IEA, “Electricity 2025: Analysis and Forecast,” 2025.

8. U.S. Department of Defense, “Mission Engineering Guide,” 2020.

9. EPRI, “Digital Substation Implementation Case Studies,” 2024.

Bibliography

Ayala S., Melvin, Galdenoro Botura Jr., and Oscar A. Maldonado A.

“AI Automates Substation Control.” IEEE Computer Applications in Power (2002).

https://www.researchgate.net/publication/3284889_AI_automates_substation_control

Diao, Ruisheng, Zhenyu Huang, Jianhui Wang, and Yilu Liu.

“Autonomous Voltage Control for Grid Operation Using Deep Reinforcement Learning.” 2019.

https://arxiv.org/abs/1904.10597

Electric Power Research Institute (EPRI).

Virtualization and Software-Defined Architectures in Power Systems. Palo Alto, CA: EPRI, 2023.

Electric Power Research Institute (EPRI).

Digital Substation Implementation Case Studies. Palo Alto, CA: EPRI, 2024.

Federal Energy Regulatory Commission (FERC).

State of the Markets Report 2024. Washington, DC: FERC, 2025.

https://www.ferc.gov/news-events/news/SMR-2024

International Energy Agency (IEA).

Electricity 2025: Analysis and Forecast to 2027. Paris: IEA, 2025.

International Electrotechnical Commission (IEC).

IEC 61850: Communication Networks and Systems for Power Utility Automation. Geneva: IEC.

McKeown, Nick, Tom Anderson, Hari Balakrishnan, Guru Parulkar, Larry Peterson, Jennifer Rexford, Scott Shenker, and Jonathan Turner.

“OpenFlow: Enabling Innovation in Campus Networks.” ACM SIGCOMM Computer Communication Review 38, no. 2 (2008): 69–74.

https://ccr.sigcomm.org/online/files/p69-v38n2n-mckeown.pdf

North American Electric Reliability Corporation (NERC).

State of Reliability 2024: Technical Assessment. Atlanta, GA: NERC, 2024.

https://www.nerc.com/globalassets/programs/rapa/pa/nerc_sor_2024_technical_assessment.pdf

North American Electric Reliability Corporation (NERC).

State of Reliability 2024: Overview. Atlanta, GA: NERC, 2024.

https://www.nerc.com/globalassets/programs/rapa/pa/nerc_sor_2024_overview.pdf

North American Electric Reliability Corporation (NERC).

2024 Long-Term Reliability Assessment. Atlanta, GA: NERC, 2024.

https://www.nerc.com/globalassets/our-work/assessments/2024-ltra_corrected_july_2025.pdf

North American Electric Reliability Corporation (NERC).

Characteristics and Risks of Emerging Large Loads. Atlanta, GA: NERC, 2025.

https://www.nerc.com/globalassets/who-we-are/standing-committees/rstc/whitepaper-characteristics-and-risks-of-emerging-large-loads.pdf

Organisation for Economic Co-operation and Development (OECD).

Data and the Future of Energy Systems. Paris: OECD, 2022.

Othman, Noor Mohd Fadzli Bin, et al.

“Realizing Electrical Digital Twin via Intelligent Substation.” Proceedings of the IEOM Conference, 2023.

https://ieomsociety.org/proceedings/2023australia/126.pdf

U.S. Department of Defense.

Mission Engineering Guide. Washington, DC: Office of the Under Secretary of Defense for Research and Engineering, 2020.

U.S. Department of Energy.

“Domestic Energy Usage from Data Centers Expected to Double or Triple by 2028.” Washington, DC, 2024.

https://www.energy.gov/articles/doe-releases-new-report-evaluating-increase-electricity-demand-data-centers

“Artificial Intelligence–Driven Protocol for Secure and Automated Substation Operations.”

Engineering Applications of Artificial Intelligence, 2025.

https://www.sciencedirect.com/science/article/pii/S0952197625016690
