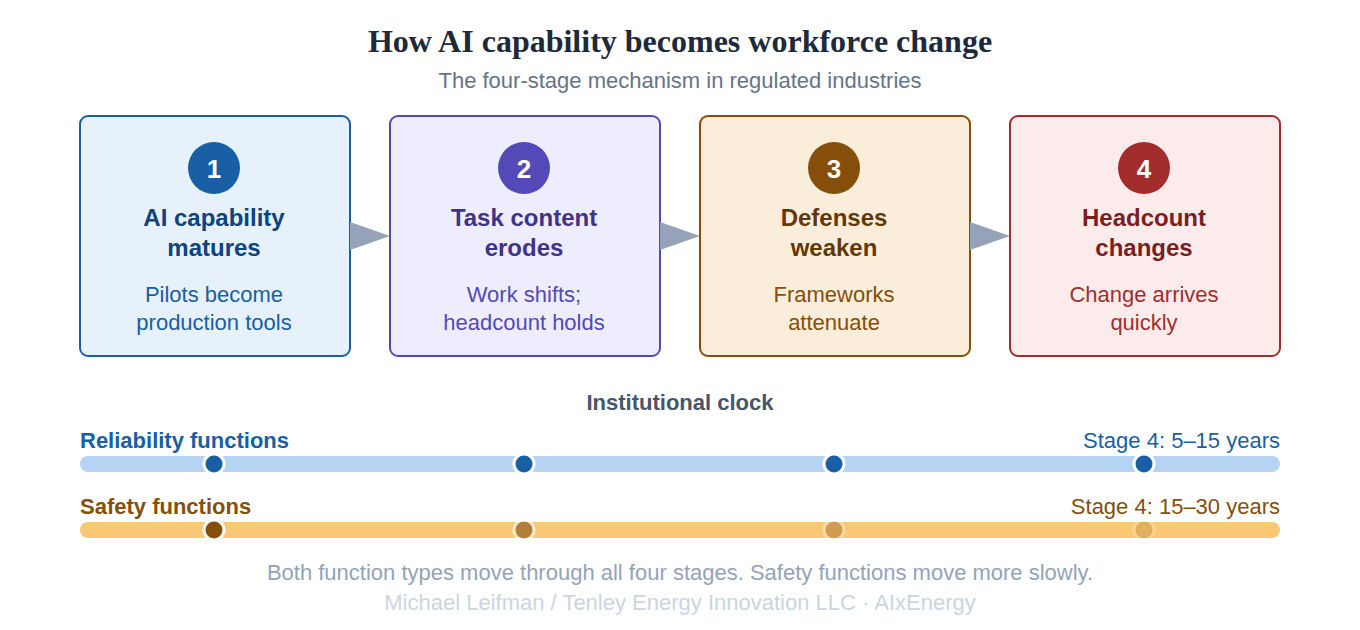

How AI reaches the US electricity workforce depends less on what the technology can do than on how regulated industries absorb new capability in practice. The process has structure. It plays out in stages, each with its own pace, and the stages differ systematically between the two kinds of work discussed in Part 1: the reliability functions whose failures are bounded and recoverable, and the safety functions whose failures can kill people.

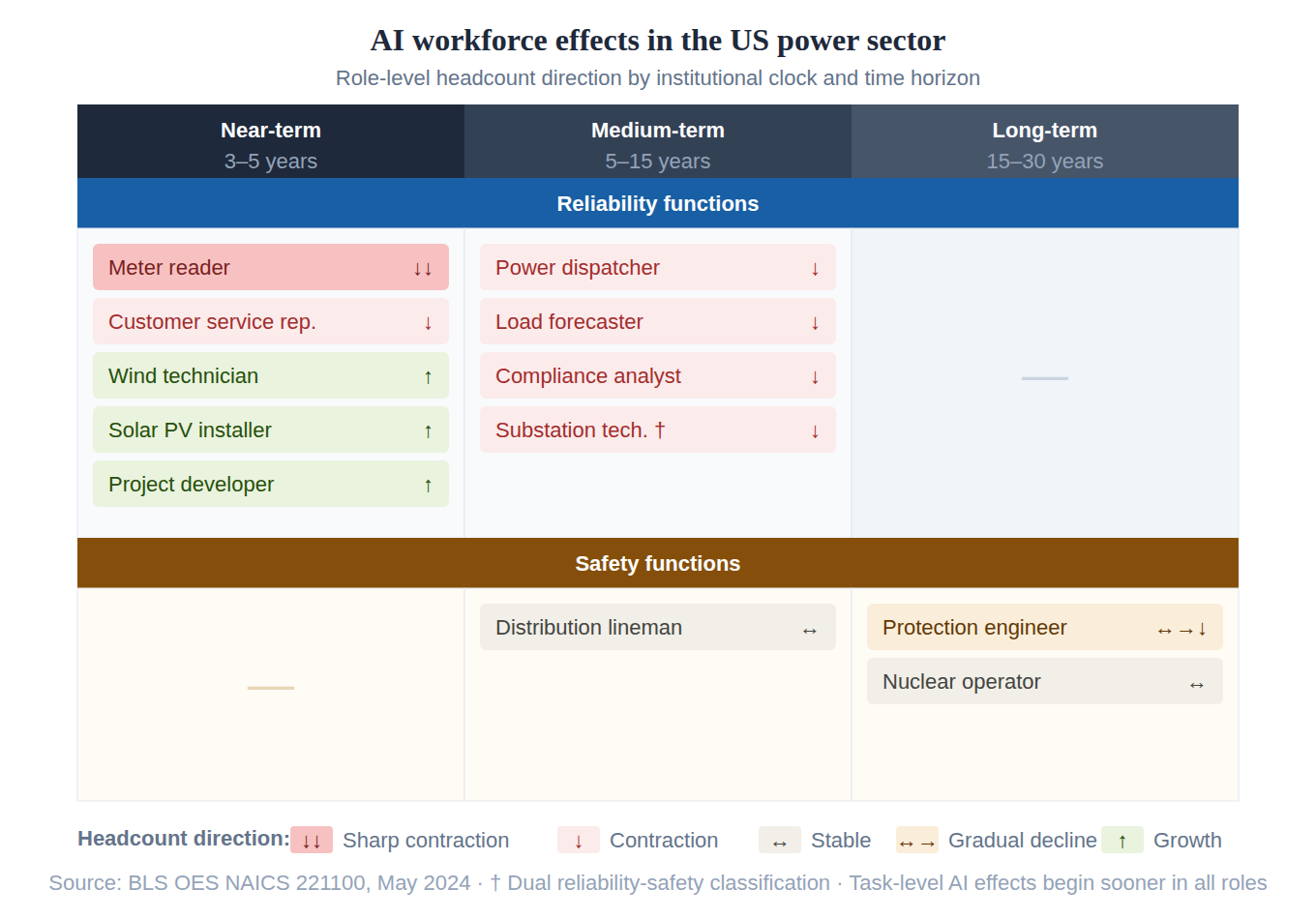

The twelve-role sample in Part 1 showed a pattern that follows from that structural difference: reliability roles change on near and medium horizons, safety roles change on medium and long horizons, and the task content inside every role is already shifting even where role-level headcount is steady or growing. What produces that timing?

The translation from AI capability to workforce change in a regulated industry is not a single event, and the four stages below are what produce that timing. They are analytically cleaner than real life ever is, but the cleanliness is part of their utility: each stage has a distinct set of signals, and knowing which stage a given role is in tells you what to watch for next.

Four stages

In the first stage, AI capability matures inside a specific role through pilot deployments, vendor partnerships, and early operational integrations that move the technology from controlled demonstration to working production tool. Capability in the lab and capability in a production workflow are not the same thing, and this is the stage at which the production-ready version is established. The Southern Company substation drone deployment described in Part 1 sits here, and so do most of the ongoing utility trials: computer vision for vegetation management, outage-prediction analytics for distribution utilities, and early deployments of distributed energy resource management systems (DERMS) supporting virtual-power-plant aggregation. Much of the present-day evidence of AI in US utility operations sits at stage one.

In the second stage, the matured capability erodes the substantive content of the human task inside the role. The worker is still titled the same way, paid the same way, and counted the same way in the BLS occupational data. What they actually do shifts: from direct judgment to supervision of automated systems, from first-pass analysis to exception handling, from problem-solving to result-validation. The role-level headcount does not change. The composition of the workday does. This stage can persist for a decade or longer without producing a single layoff, because the institutional architecture supporting the role is still intact and has not been directly contested. The July 2025 CAISO pilot with OATI is an early stage-two deployment: a generative-AI tool built into the outage management system, with CAISO's chief information and technology officer framing it in terms of "improving situational awareness and freeing up time for other important tasks" for operators. The dispatcher is still the dispatcher; the work is shifting around them. Similar operator-assist and planning-AI pilots are active at PJM and SPP. Stage-two dynamics are visible in utility control rooms now.

In the third stage, eroded role content begins to weaken the institutional defenses that sustained the role's headcount. Licensing requirements were written against tasks described in language that no longer maps cleanly to what the licensed person actually does. Union contracts codified staffing ratios against work that has substantively changed. Regulatory staffing minimums were built on assumptions about operator workload that the capability deployment itself is stressing. The NERC System Operator Certification Program, which credentials reliability coordinators, transmission operators, and balancing authority operators against specific task competencies, is a likely stage-three battleground. Those competencies are defined against work that AI-augmented control rooms are visibly reshaping, and the task content the certification was built to verify no longer cleanly describes what operators do day to day. This is the workforce face of the governance-insufficiency problem that Brandon Owens diagnoses as the latency gap: as operational judgment migrates into software, the institutional frameworks built around the human exercising that judgment lose their footing. None of these defenses collapses at once. They attenuate. The arguments for them become harder to make on their original grounds, because those grounds no longer describe the work actually being done.

In the fourth stage, the role-level headcount change follows. Once an institutional defense becomes untenable on its original grounds, a change that looked impossible at the outset can arrive quickly. Two corners of the utility workforce show what this looks like. Meter reading has largely completed a stage-four transition: the role has contracted as advanced metering infrastructure has been deployed widely, and it is significantly smaller today than it was a decade ago. Utility customer service is further behind but under the kind of pressure that has produced stage-four contractions in other industries. A Gartner survey of 321 customer service and support leaders, conducted in October 2025, found that 20 percent had already reduced agent staffing because of AI. The same general mechanism is operating inside utility customer operations, though the pace of compression in utilities specifically has not been independently documented. Neither transition was announced as a stage-four AI event. Both are playing out anyway, on timelines that are fast relative to the long second and third stages that preceded them.

Three precedents

Three regulated industries with safety-critical workforces have moved through some version of this arc, and where each currently sits is the closest available proxy for where the US power sector is heading.

Aviation moved from three-person to two-person commercial cockpits in the 1980s as autopilot systems matured and the flight engineer role was absorbed by automation. That was a stage-four event. Since then, the copilot role has been moving through stages two and three for several decades. Airbus and Dassault Aviation, Europe's main makers of airliners and business jets respectively, formally proposed extended minimum-crew and single-pilot concepts to European regulators in 2017, and a formal rulemaking task on reduced-crew operations was opened. A study for the European Union Aviation Safety Agency (EASA), led by the Royal Netherlands Aerospace Center, reported in June 2025 that equivalent safety could not be demonstrated at current technology levels, and the rulemaking was paused pending further technological development. The institutional defense is currently holding, but it is holding under explicit pressure from manufacturers and operators with a clear commercial interest in the change. Institutions in safety-critical industries do not break; they erode, and then they give way.

Freight rail sits in a different position on the same arc, and its institutional defense has been actively contested in both directions over the past decade. The Federal Railroad Administration issued a final rule in April 2024 codifying a two-person minimum crew requirement for freight operations, responding in part to the East Palestine derailment and to a long campaign by the SMART Transportation Division (SMART-TD) and the Brotherhood of Locomotive Engineers and Trainmen (BLET). The rule includes carve-outs for certain operations and a special-approval process for one-person crew petitions. The Association of American Railroads and several Class I carriers challenged the rule, and the consolidated case is currently before the 11th Circuit Court of Appeals. A bill to nullify the rule was introduced in the House in 2025. In rail, regulatory action strengthened the institutional defense even as the underlying technological capability continued to mature. The resulting tension is producing visible legal and political contestation rather than smooth attenuation: stage-three pressure is running in both directions at once.

Maritime is moving along the same arc on a different, international timeline. The International Maritime Organization's non-mandatory Code for Maritime Autonomous Surface Ships (the MASS Code) is on track for adoption in May 2026, with a mandatory code targeted for 2032. Norway, China, and Japan have conducted trials at varying levels of autonomy for short-route operations. The sector is closer to building a formal regulatory framework than to commercial deployment, but the international institutional architecture is moving toward accommodating reduced and remote crewing on a defined timeline. Maritime is at an earlier point in stage three than aviation, yet its institutional path toward stage four is more clearly mapped than in either aviation or rail.

The lesson from all three is the same. The institutional defenses around safety-critical workforces do not break under direct technological pressure. They erode through long second and third stages of role-content shift, and the eventual stage-four change happens fast relative to the duration of the erosion that preceded it. The timing of the stage-four transition depends less on technology than on the institutional architecture surrounding the role, and the architecture varies country by country, agency by agency, contract by contract.

Mapping onto the US power sector

The four-stage pattern maps onto the workforce matrix from Part 1 with a specific structure. Reliability functions are mostly in stages one and two now and will reach stage four on a five-to-fifteen-year horizon, primarily through coordination-layer consolidation: smaller dispatch teams operating higher-capability systems, smaller market-analysis teams covering broader portfolios, customer-service operations restructured around AI handling first-line interactions, regulatory and compliance functions compressed by LLM-driven drafting and evidence compilation. Safety functions are mostly in stage one now and will pass through stages two and three over the same fifteen-year horizon while maintaining role-level headcount. The work inside those roles will visibly change. The role count will not. The stage-four transition for safety functions arrives on a fifteen-to-thirty-year horizon, and its timing depends on generational turnover in the regulators, operator communities, and legal architecture that sustain the current institutional defenses.

Task-level effects are visible throughout this progression, on a much shorter timeline than role-level effects. A wind technician, in an occupation whose headcount is growing, will still experience significant task-level change in the next three to five years. A nuclear operator, in a role whose headcount holds steady through the 2040s, will spend much of the intervening period supervising systems that were not part of the job description when the operator was licensed. The worker's experience of AI is a task-level experience long before the occupational data registers anything.

That structure is the through-line of the rest of the series.

What this means now

The implications of that timeline arrive at different desks at different times.

For utilities. The most consequential workforce-planning question facing an integrated utility in 2026 is not how to upskill the existing workforce for AI. It is how to plan succession into reliability-function roles whose headcount targets are likely to compress materially over the next decade, even as replacement-cohort hiring continues against the original targets. The utilities that navigate the transition cleanly will be the ones whose workforce plans treat the matrix in Part 1 as the assumption set rather than the current org chart. The parallel implication for training and development investment is that the largest returns will come from task-level retooling inside persistent roles, not from wholesale retraining of workers whose roles are contracting.

For ISOs, RTOs, and state regulators. Reliability-function staffing minimums and tariff structures that were set against the workload of pre-AI operations will increasingly look out of step with what the workload actually is. The institutional pressure to relax those minimums will arrive before the institutional comfort to do so. The strongest regulatory posture is to anticipate that pressure rather than respond to it after the gap becomes embarrassing. Performance-based regulation focused on system outcomes will hold up better through the transition than rigid input-based staffing rules will. The harder regulatory question is on the safety side, where the temptation to relax human-in-the-loop requirements will eventually arrive but should be resisted until the institutional architecture for verifying equivalent safety is built, not assumed. The NERC operator certification framework in particular will face pressure to reflect changed task content long before it faces pressure to reduce certified headcount. The former is a reasonable accommodation; the latter should not be granted at the first sign of institutional comfort.

For investors. The capital-allocation implication is that the binding constraint on AI-driven utility productivity is institutional rather than technological. The companies that will capture the productivity gain are the ones whose internal regulatory, union, and operational governance architectures are built to absorb the transition without litigating each piece of it separately. That is a different screen from what most utility-AI investment frameworks currently use, and it is closer to the screen that worked in industries that have already moved through earlier stages of the same arc. The investment thesis on a utility's AI exposure is more a question about its institutional flexibility than about its vendor relationships.

For workers. The cognitive-AI exposure pattern visible in the Stanford Digital Economy Lab's November 2025 ADP payroll analysis, which finds a 16 percent relative decline in employment for workers aged 22 to 25 in the most AI-exposed occupations, has not yet shown up in utility occupational data. The roles in the utility workforce most exposed to that pattern are dispatchers, market analysts, customer service representatives, and rate case economists. The roles least exposed at the role level are the ones tagged safety in the matrix, though the task content in even those roles is changing. The recommendation that follows is not a general-purpose one; it depends on which row of the matrix the worker sits in and on whether the change most likely to affect that worker is a role-level contraction or a task-level shift. For the most exposed cognitive roles, the entry-level concentration of the AI displacement signal makes early-career adaptability the key variable. For the safety-function roles, the change is real but slower at the role level and will arrive first inside the work itself. The human-in-the-loop requirement will be defended for at least another decade; the content of the work inside that requirement will look very different by the end of it.

What comes next

Part 3 builds out the full role-by-role matrix across NAICS 221100 and the surrounding institutional infrastructure: roughly forty roles, with the four-stage mechanism applied systematically across the industry.

Part 4 takes up the capability-creation half of the AI-and-robotics story: the new activities that utilities will be able to perform for the first time, rather than the existing activities compressed by automation.

Part 5 returns to strategy with specific recommendations for each audience, and engages directly with the productivity claims in current consultancy framings of AI in utilities. The numbers in those framings support the argument developed here more than they are usually understood to. The workforce implication of the productivity claims is what the consultancy register declines to name.

The translation from AI capability to utility workforce change runs through institutional defenses that hollow out from within rather than break from outside. Task content inside roles shifts first and most visibly. Role-level headcount follows on longer timelines that differ systematically between reliability and safety functions. The decade ahead looks slow until it does not.